Character Encoding and the Internet

My partner ran into a character encoding issue at work recently that we were talking about over dinner (you all talk about things like character encoding at dinner too, right?), and I realized I knew less about it than I wanted to. And as usual, one of my favorite ways to learn something new is to write a blog post about it, so here we are!

At a high level, we know that all data transferred over the internet is sent in packets made up of bytes. So when it comes to character encoding, the question becomes: how do all the characters that our users enter into forms, or that we pull out of or send to the database, or receive from external API calls, become bytes that can be passed over the network?

We need a way to turn all of these numbers and letters and symbols into bits — this is called encoding.

Character Sets

A character set is not technically an encoding, but it is a prerequisite to one. It defines the set of characters that can be encoded, and usually assigns each one a numeric value that is converted into bytes during the encoding step. ASCII and Unicode are examples of this.
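To make the "assigns them a value" part concrete, here is a minimal Python sketch. The helper names `code_points` and `from_code_points` are made up for this post; they just wrap Python's built-in `ord` and `chr`, which look up a character's numeric value and the reverse.

```python
def code_points(text: str) -> list[int]:
    """Return the numeric value the character set assigns to each character."""
    return [ord(ch) for ch in text]


def from_code_points(values: list[int]) -> str:
    """Map numeric values back to their characters."""
    return "".join(chr(v) for v in values)


print(code_points("Hi!"))               # [72, 105, 33]
print(from_code_points([72, 105, 33]))  # Hi!
```

Note that nothing here has been turned into bytes yet. These are just the numbers the character set assigns, which is exactly why a character set is a prerequisite to encoding rather than an encoding itself.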

ASCII

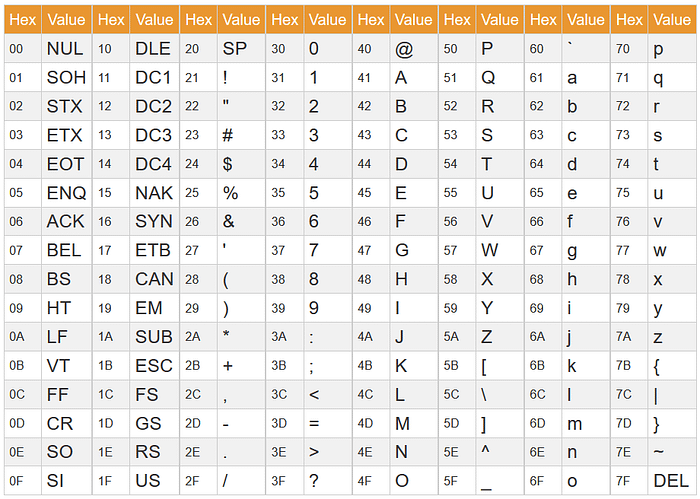

ASCII was one of the earlier character sets. Here’s a table that shows how characters are included:

The problem with this is pretty immediately obvious, isn’t it? What if you want to use a non-Latin alphabet? What if you want to use a special character (for example: ©)? You can’t. So while ASCII is still used (for example, all URLs must use only ASCII characters), on its own it’s really not enough for transferring text across networks.
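You can see this limitation for yourself with a quick sketch. The function name `fits_in_ascii` is just something I made up for illustration; it relies on Python raising `UnicodeEncodeError` when a string contains a character that ASCII doesn't include.

```python
def fits_in_ascii(text: str) -> bool:
    """Return True if every character in text is part of ASCII."""
    try:
        text.encode("ascii")
    except UnicodeEncodeError:
        # At least one character has no ASCII value.
        return False
    return True


print(fits_in_ascii("hello"))   # True
print(fits_in_ascii("©"))       # False
print(fits_in_ascii("привет"))  # False
```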

Unicode

Enter Unicode. Unicode was developed as a global standard to represent all characters in all languages (not to mention emoji and other special characters). Unicode actually uses the same character mappings as ASCII for the characters that ASCII includes; it just includes a lot more. A lot: there are 1,114,112 possible Unicode code points, although not all of them are assigned to characters. (You can see all of them here, if you’re curious!)
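Here is a small sketch that checks both claims in Python, assuming Python 3.3 or later, where `sys.maxunicode` is the largest value Unicode allows. The helper name `ascii_values_match_unicode` is hypothetical.

```python
import sys


def ascii_values_match_unicode() -> bool:
    """Check that each ASCII value maps to the same character in Unicode."""
    return all(bytes([v]).decode("ascii") == chr(v) for v in range(128))


print(ascii_values_match_unicode())  # True
print(sys.maxunicode + 1)            # 1114112
print(ord("©"))                      # 169
print(ord("😀"))                     # 128512
```

The last two lines show characters that ASCII has no room for: © sits just past the ASCII range, and the emoji is far beyond it.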

Encoding

Encoding is how we actually convert these mapped character values into bits that can be transferred over networks.
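As a rough sketch of what that conversion looks like, the snippet below prints the bits for a couple of characters. It assumes UTF-8 as the encoding purely as an example (other encodings would produce different bytes), and the `to_bits` helper is a made-up name for illustration.

```python
def to_bits(text: str, encoding: str = "utf-8") -> str:
    """Encode text to bytes, then show each byte as eight bits."""
    return " ".join(f"{byte:08b}" for byte in text.encode(encoding))


print(to_bits("A"))  # 01000001
print(to_bits("é"))  # 11000011 10101001

# Decoding with the same encoding turns the bytes back into characters.
print("é".encode("utf-8").decode("utf-8"))  # é
```

Notice that "A" fits in a single byte while "é" takes two under this particular encoding. The character's mapped value and the bytes sent over the network are related, but they are not the same thing.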